Keeping up with an industry as fast-moving as AI is a tall order. So until an AI can do it for you, here’s a handy roundup of recent stories in the world of machine learning, along with notable research and experiments we didn’t cover on their own.

By the way — TechCrunch plans to launch an AI newsletter soon. Stay tuned.

This week in AI, eight prominent U.S. newspapers owned by investment giant Alden Global Capital, including the New York Daily News, Chicago Tribune and Orlando Sentinel, sued OpenAI and Microsoft for copyright infringement relating to the companies’ use of generative AI tech. They, like The New York Times in its ongoing lawsuit against OpenAI, accuse OpenAI and Microsoft of scraping their IP without permission or compensation to build and commercialize generative models such as GPT-4.

“We’ve spent billions of dollars gathering information and reporting news at our publications, and we can’t allow OpenAI and Microsoft to expand the big tech playbook of stealing our work to build their own businesses at our expense,” Frank Pine, the executive editor overseeing Alden’s newspapers, said in a statement.

The suit seems likely to end in a settlement and licensing deal, given OpenAI’s existing partnerships with publishers and its reluctance to hinge the whole of its business model on the fair use argument. But what about the rest of the content creators whose works are being swept up in model training without payment?

It seems OpenAI’s thinking about that.

A recently published research paper co-authored by Boaz Barak, a scientist on OpenAI’s Superalignment team, proposes a framework to compensate copyright owners “proportionally to their contributions to the creation of AI-generated content.” How? Through cooperative game theory.

The framework evaluates to what extent content in a training data set — e.g. text, images or some other data — influences what a model generates, employing a game theory concept known as the Shapley value. Then, based on that evaluation, it determines the content owners’ “rightful share” (i.e. compensation).

Let’s say you have an image-generating model trained using artwork from four artists: John, Jacob, Jack and Jebediah. You ask it to draw a flower in Jack’s style. With the framework, you can determine the influence each artist’s works had on the art the model generates and, thus, the compensation that each should receive.
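To make the idea concrete, here is a minimal sketch of an exact Shapley value calculation for the four-artist example. It is an illustration, not the paper's implementation: the `utility` function and its scores are made-up assumptions standing in for "how good is the flower when the model is trained on only these artists," and `shapley_values` is a hypothetical helper name.

```python
from itertools import combinations
from math import factorial

ARTISTS = ("John", "Jacob", "Jack", "Jebediah")

def utility(coalition):
    """Hypothetical quality score for a model trained on `coalition` only.

    The numbers are invented for illustration; a real system would have to
    measure this by training or evaluating models.
    """
    base = {"John": 1.0, "Jacob": 1.0, "Jack": 6.0, "Jebediah": 2.0}
    score = sum(base[a] for a in coalition)
    # Assumed synergy: Jack's and Jebediah's work reinforce each other.
    if "Jack" in coalition and "Jebediah" in coalition:
        score += 2.0
    return score

def shapley_values(players, v):
    """Exact Shapley values: each player's weighted average marginal
    contribution over every subset of the other players."""
    n = len(players)
    values = {}
    for p in players:
        others = [q for q in players if q != p]
        total = 0.0
        for k in range(n):
            # Probability weight of a subset of size k preceding player p.
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                s = frozenset(subset)
                total += weight * (v(s | {p}) - v(s))
        values[p] = total
    return values

if __name__ == "__main__":
    shares = shapley_values(ARTISTS, utility)
    for artist, share in shares.items():
        print(f"{artist}: {share:.2f}")
```

In this toy setup the shares add up to the score of the model trained on everyone, and the assumed synergy bonus is split evenly between Jack and Jebediah. Those two properties are a large part of why the Shapley value is attractive for dividing up compensation.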

There is a downside to the framework, however — it’s computationally expensive. The researchers’ workarounds rely on estimates of compensation rather than exact calculations. Would that satisfy content creators? I’m not so sure. If OpenAI someday puts it into practice, we’ll certainly find out.
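Why so expensive? An exact Shapley calculation considers every subset of contributors, and the number of subsets doubles with each one added. A common way around this is to sample random orderings and average each contributor's marginal contribution. The sketch below shows that generic technique; whether it matches the researchers' own workarounds is an assumption, and the `utility` scores and the `estimate_shapley` name are hypothetical.

```python
import random

ARTISTS = ("John", "Jacob", "Jack", "Jebediah")

def utility(coalition):
    """Hypothetical quality score for a model trained on `coalition` only
    (invented numbers, for illustration)."""
    base = {"John": 1.0, "Jacob": 1.0, "Jack": 6.0, "Jebediah": 2.0}
    score = sum(base[a] for a in coalition)
    if "Jack" in coalition and "Jebediah" in coalition:
        score += 2.0  # assumed synergy between the two artists
    return score

def estimate_shapley(players, v, num_samples=2000, seed=0):
    """Monte Carlo estimate: average marginal contributions over
    randomly sampled orderings instead of all subsets."""
    rng = random.Random(seed)
    totals = {p: 0.0 for p in players}
    for _ in range(num_samples):
        order = list(players)
        rng.shuffle(order)
        coalition = set()
        previous = v(coalition)
        for p in order:
            # Credit p with the change in score when p joins the coalition.
            coalition.add(p)
            current = v(coalition)
            totals[p] += current - previous
            previous = current
    return {p: total / num_samples for p, total in totals.items()}

if __name__ == "__main__":
    for artist, share in estimate_shapley(ARTISTS, utility).items():
        print(f"{artist}: {share:.2f}")
```

The estimates still sum to the full model's score, but any individual share is only approximate and shifts with the sample size and random seed, which is exactly the kind of imprecision content creators might object to.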

Here are some other AI stories of note from the past few days:

- Microsoft reaffirms facial recognition ban: Language added to the terms of service for Azure OpenAI Service, Microsoft’s fully managed wrapper around OpenAI tech, more clearly prohibits integrations from being used “by or for” police departments for facial recognition in the U.S.

- The nature of AI-native startups: AI startups face a different set of challenges from your typical software-as-a-service company. That was the message from Rudina Seseri, founder and managing partner at Glasswing Ventures, last week at the TechCrunch Early Stage event in Boston; Ron has the full story.

- Anthropic launches a business plan: AI startup Anthropic is launching a new paid plan aimed at enterprises as well as a new iOS app. Team — the enterprise plan — gives customers higher-priority access to Anthropic’s Claude 3 family of generative AI models plus additional admin and user management controls.

- CodeWhisperer no more: Amazon CodeWhisperer is now Q Developer, a part of Amazon’s Q family of business-oriented generative AI chatbots. Available through AWS, Q Developer helps with some of the tasks developers do in the course of their daily work, like debugging and upgrading apps — much like CodeWhisperer did.

- Just walk out of Sam’s Club: Walmart-owned Sam’s Club says it’s turning to AI to help speed up its “exit technology.” Instead of requiring store staff to check members’ purchases against their receipts when leaving a store, Sam’s Club customers who pay either at a register or through the Scan & Go mobile app can now walk out of certain store locations without having their purchases double-checked.

- Fish harvesting, automated: Harvesting fish is an inherently messy business. Shinkei is working to improve it with an automated system that more humanely and reliably dispatches the fish, resulting in what could be a totally different seafood economy, Devin reports.

- Yelp’s AI assistant: Yelp announced this week a new AI-powered chatbot for consumers — powered by OpenAI models, the company says — that helps them connect with relevant businesses for their tasks (like installing lighting fixtures, upgrading outdoor spaces and so on). The company is rolling out the AI assistant on its iOS app under the “Projects” tab, with plans to expand to Android later this year.

More machine learnings

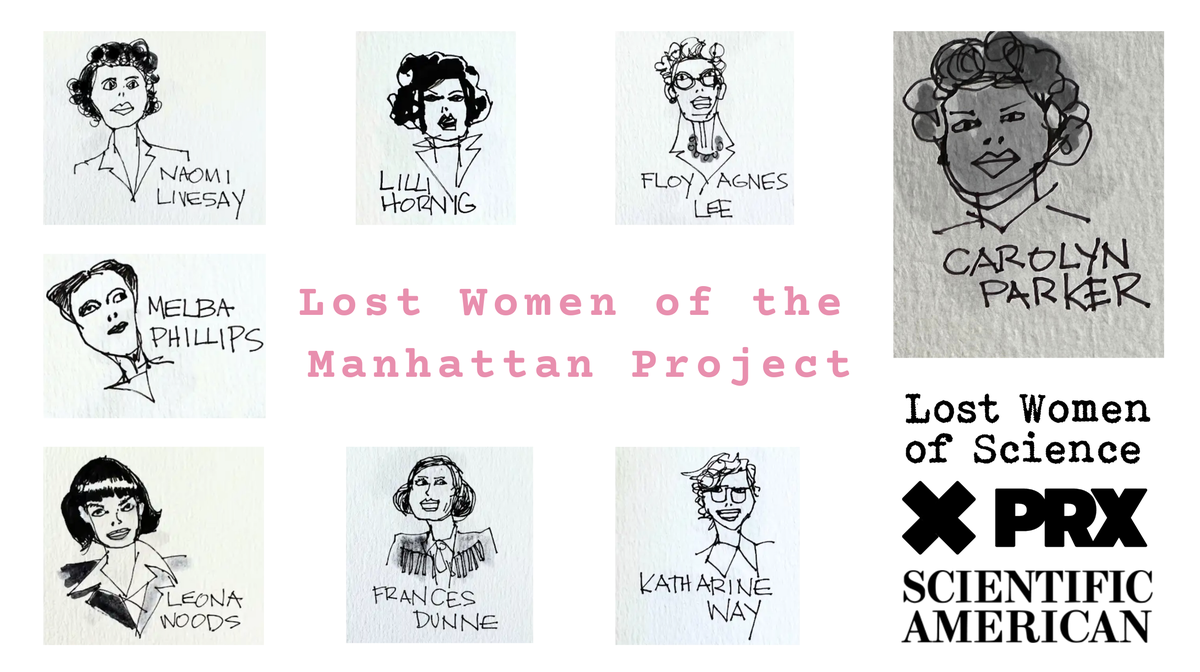

Image Credits: US Dept of Energy

Sounds like there was quite a party at Argonne National Lab this winter when they brought in a hundred AI and energy sector experts to talk about how the rapidly evolving tech could be helpful to the country’s infrastructure and R&D in that area. The resulting report is more or less what you’d expect from that crowd: a lot of pie in the sky, but informative nonetheless.

Looking at nuclear power, the grid, carbon management, energy storage, and materials, the themes that emerged from this get-together were, first, that researchers need access to high-powered compute tools and resources; second, that they need to learn to spot the weak points of simulations and predictions (including those enabled by the first thing); and third, that they need AI tools that can integrate and make accessible data from multiple sources and in many formats. We’ve seen all these things happening across the industry in various ways, so it’s no big surprise, but nothing gets done at the federal level without a few boffins putting out a paper, so it’s good to have it on the record.

Georgia Tech and Meta are working on part of that with a big new database called OpenDAC, a pile of reactions, materials, and calculations intended to help scientists designing carbon capture processes to do so more easily. It focuses on metal-organic frameworks, a promising and popular material type for carbon capture, but one with thousands of variations, which haven’t been exhaustively tested.

The Georgia Tech team got together with Oak Ridge National Lab and Meta’s FAIR to simulate quantum chemistry interactions on these materials, using some 400 million compute hours — way more than a university can easily muster. Hopefully it’s helpful to the climate researchers working in this field. It’s all documented here.

We hear a lot about AI applications in the medical field, though most are in what you might call an advisory role, helping experts notice things they might not otherwise have seen, or spotting patterns that would have taken hours for a tech to find. That’s partly because these machine learning models just find connections between statistics without understanding what caused or led to what. Cambridge and Ludwig-Maximilians-Universität München researchers are working on that, since moving past basic correlative relationships could be hugely helpful in creating treatment plans.

The work, led by Professor Stefan Feuerriegel from LMU, aims to make models that can identify causal mechanisms, not just correlations: “We give the machine rules for recognizing the causal structure and correctly formalizing the problem. Then the machine has to learn to recognize the effects of interventions and understand, so to speak, how real-life consequences are mirrored in the data that has been fed into the computers,” he said. It’s still early days for them, and they’re aware of that, but they believe their work is part of an important decade-scale development period.

Over at the University of Pennsylvania, grad student Ro Encarnación is working on a new angle in the “algorithmic justice” field we’ve seen pioneered (primarily by women and people of color) in the last seven or eight years. Her work is more focused on the users than the platforms, documenting what she calls “emergent auditing.”

When TikTok or Instagram puts out a filter that’s kinda racist, or an image generator that does something eye-popping, what do users do? Complain, sure, but they also continue to use it, and learn how to circumvent or even exacerbate the problems encoded in it. It may not be a “solution” the way we think of it, but it demonstrates the diversity and resilience of the user side of the equation: they’re not as fragile or passive as you might think.